- Image and Video Editing: Feedbacks/Queries -

Video-Image Editing

Methods and Scripts to Edit Images and Videos

Table of Contents

- Pixels, DPI, PPI and Screen Resolution |:| Digit Recognition and ANN MLP classifications --- Computer Vision | Image Editing using ImageMagick |:| Unsharp Mask [] Image Denoising using ML |*| Rasterize and Vectorize --- Convolution, Edge Detection, Sobel kernel, Smoothen, Sharpen and Intensify Images

- Video Editing --- Image Filtering, Masking and Denoising ---Image Deskewing -|- Audio and Video Codecs | Video Editing using FFmpeg | Simple Rescaling | Create Videos from Images |:| Timeline editing and trimming |:| Crop videos | Overlay videos |:| Concatendate videos | Pillarboxing: add padding to videos |-| Color Effects |:| Freeze Effect [] Overlay videos using MoviePy --- Animations like PowerPoint |:| Box Transition Effect !! Cube Transition |=| Arrow Transition --- Animations using Blender -|- Image and Video Editing using OpenCV |+| PowerBI and Pivot Table

Computer Vision: OpenCV

Image processing is an integral part of computer vision. OpenCV (Open-source Computer Vision Library) is an open source computer vision and machine learning software library. It integrates itself seamlessly with Python and NumPy. In can be installed in Python 3.5 for Windows by command: C:\Windows\System32>py.exe -m pip install -U opencv-python. One can check the version by statement "print(cv2.__version__)" in Python. Python also has a library for image processing called 'Pillow'. Big difference between skimage imread and opencv is that it reads images as BGR instead of RGB (skimage). This link: github.com/BhanuPrakashNani/Image_Processing contains a detailed list (approx 35) of image processing methods and Python codes.- cv2.IMREAD_COLOR: Loads a color image. Any transparency of image will be neglected. It is the default flag. Same as cv2.imread("image_01.png", 1)

- cv2.IMREAD_GRAYSCALE: Loads image in grayscale mode, same as cv2.imread("image_01.png", 0)

- cv2.IMREAD_UNCHANGED: Loads image as such including alpha channel, same as cv2.imread("image_01.png", -1)

- Instead of these three flags, you can simply pass integers 1, 0 or -1 respectively as shown above

- OpenCV and skimage directly store imported images as numpy arrays

- Maintain output window until user presses a key or 1000 ms (1s): cv2.waitKey(1). cv2.waitKey(0) display the window until any key is pressed.

- Destroys all windows created: cv2.destroyAllWindows()

NumPy and SciPy arrays of image objects store information as (H, W, D) order - also designated as axis=0, axis=1 and axis=2 respectively. The values can be transposed as img = transpose(-1, 0, 1) = (D, W, H) = transpose(2, 0, 1). Here, (H, W, D) can be access either by (0, 1, 2) or (-3, -2, -1).

| S. No. | Operation | OpenCV Syntax |

| 01 | Open or read Image | im = cv2.imread("img/bigData.png", 1) |

| 02 | Save or write Image | cv2.imwrite("Scaled Image", imgScaled) |

| 03 | Show or display Image: First argument is window name, second argument is image | cv2.imshow("Original image is", im) |

| 04 | Resize or scale Images | imgScaled = cv2.resize(im, None, fx=2, fy=2, interpolation = cv2.INTER_CUBIC) |

| 05 | Convert images from BGR to RGB | imgRGB = cv2.cvtColor(im, cv2.COLOR_BGR2RGB)* |

| 06 | Show only blue channel of Image | bc = im[:, :, 0]; cv2.imshow("Blue Channel", bc) |

| 07 | Show only green channel of Image | gc = im[:, :, 1]; cv2.imshow("Green Channel", gc) |

| 08 | Show only red channel of Image | rc = im[:, :, 2]; cv2.imshow("Red Channel", rc) |

| 09 | Split all channel at once | bc,gc,rc = cv2.split(im) |

| 10 | Merge channels of the Image | imgMrg = cv2.merge([bc, gc, rc]) |

| 11 | Apply Gaussian Smoothing (Filter) | imgGauss = cv2.GaussianBlur(im, (3,3), 0, borderType = cv2.BORDER_CONSTANT) |

| 12 | Edge detection | imgEdges = cv2.Canny(img, 100, 200) where 100 and 200 are minimum and maximum values |

| 13 | Median Blur | imgMedBlur = cv2.medianBlur(img, 3): kernel size should be an odd number |

| 14 | Get dimensions of an image | height, width, channels = img.shape, channels = img.shape[2] |

* hsvImg = cv2.cvtColor(im, cv2.COLOR_BGR2HSV); h, s, v = cv2.split(hsvImg) and labImg = cv2.cvtColor(im, cv2.COLOR_BGR2LAB); L, A, B = cv2.split(labImg). Here, HSV stands for Hue, Saturation, Value and LAB - Lightness, A (Green to red), B (Blue to Yellow). Hue is the basic color, like red, green, or blue, while saturation is how intense the color is. A grayscale or black-and-white photo has no colour saturation - highly saturated images may look artificial while dealing with natural looks such as plants. img_blurred = cv2.blur(image, (5, 5)) where (5, 5) is the kernel size.

To read-write images: from skimage import io, to apply filters: from skimage import filters or from skimage.filters import gaussian, sobel.

| S. No. | Operation | skimage Syntax |

| 01 | Open or read Image | im = io.imread("img/bigData.png", as_gray=False) |

| 02 | Save or write Image | io.imsave("Scaled Image", imgScaled) |

| 03 | Show or display Image | io.imshow(im) |

| 04 | Resize or scale Images | imgScaled = rescale(img, 2.0, anti_aliasing = False), imgSized = resize(img, (500, 600), anti_aliasing = True) |

| 05 | Convert images from BGR to RGB | imgRGB = cv2.cvtColor(im, cv2.COLOR_BGR2RGB)* |

| 06 | Show only blue channel of Image | bc = im[:, :, 0]; cv2.imshow("Blue Channel", bc) |

| 07 | Show only green channel of Image | gc = im[:, :, 1]; cv2.imshow("Green Channel", gc) |

| 08 | Show only red channel of Image | rc = im[:, :, 2]; cv2.imshow("Red Channel", rc) |

| 09 | Split all channel at once | bc,gc,rc = cv2.split(im) |

| 10 | Merge channels of the Image | imgMrg = cv2.merge([bc,gc,rc]) |

| 11 | Apply Gaussian Smoothing (Filter)** | imgGauss = filters.gaussian(im, sigma=1, mode='constant', cval=0.0) |

| 13 | Median Blur*** | imgMedBlur = median(img, disk(3), mode='constant', cval=0.0) |

| 14 | Get dimensions of an image | w = img.size[0], h = img.size[1] |

** 'sigma' defines the std dev of the Gaussian kernel, different from cv2. In general, standard deviation controls size of the region around the edge pixel that is affected by sharpening. A large value results in sharpening of a wider region around edge and vice versa.

**** from skimage.morphology import disk

Image and Video Enhancement

Before proceeding to Enhancement, let's explore the image basics first: Brightness, Contrast, Alpha, Gamma, Transparency, Hue, Saturation... are few of the terms which should be clearly understood to follow the techniques used for image enhancements. Brightness: it refers to depth (or energy or intensity) of colour with respect to some reference value. Contrast: the difference between maximum and minimum pixel intensity in an image. The contrast makes certain portion of an image distinguishable with the remaining.

Some operations generally performed on images are flip, crop, trim, blur, invert, overlay, mask, rotate, translate, pad, compress, expand... and combination of these. For example, to translate the image towards right keeping the image size constant is a combination of crop and pad operation. Sharpness is contrast (difference) between two different colours. It enhances definition of edge in an image where quick transition from one colour to another convey human vision a 'sharp' change.

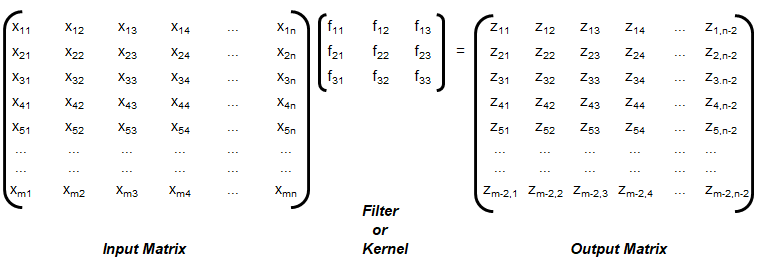

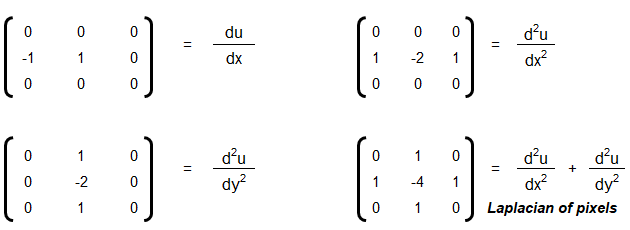

Convolution: This is special type of matrix operation defined below. Convolution is the most widely used method in computer vision problems and algorithms dealing with image enhancements. There matrix 'f' is known as convolution filter or kernel, which is usually 'odd' in size. Strictly speaking the method explained here is cross-correlation. However, this definition is widely used as convolution in machine language applications.

The convolution explained above is known as 'valid', without padding. Note that the size of output matrix has reduced by 2 in each dimension. Sometimes, padding is used where elements or layers of pixels are added all around, that is p rows and p columns are added to the input matrix with (conventionally) zeros. This helps get the output matrix of same size as that of input matrix. This is known as 'same' convolution. Similarly, the "strided convolution" use matrix multiplications in 'strides' or 'steps' where more than 1 rows and columns are stepped in the calculation of zij.

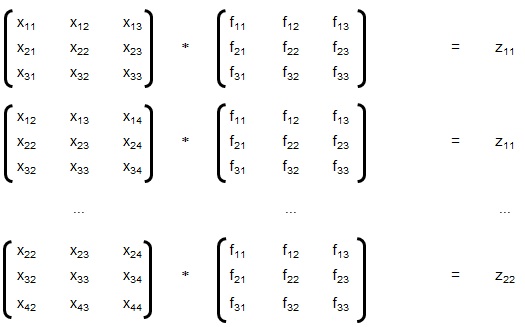

Convolution is a general method to create filter effect for images where a matrix is applied to an image matrix and a mathematical operation (generally) comprising of integers. The output after convolution is a new modified filtered image with a slight blur, Gaussian blur, edge detection... The smaller matrix of numbers or fractions that is used in image convolutions is called a Kernel. Though the size of a kernel can be arbitrary, a 3 × 3 is often used. Some examples of filters are:

Following OCTAVE script produces 7 different type of images for a given coloured image as input.

The Sobel kernel may not be effective at all for images which do not have sharp edges. The GNU OCTAVE script used to generate these image enhancements and convolutions is described here.%In general Octave supports four different kinds of images % gray scale images|RGB images |binary images | indexed images % [M x N] matrix |[M x N x 3] array |[M x N] matrix | [M x N] matrix %class: double |double, uint8, uint16 |class: logical | class: integer %The actual meaning of the value of a pixel in a grayscale or RGB image depends %on the class of the matrix. If the matrix is of class double pixel intensities %are between 0 and 1, if it is of class uint8 intensities are between 0 and 255, %and if it is of class uint16 intensities are between 0 and 65535. %A binary image is an M-by-N matrix of class logical. A pixel in a binary image %is black if it is false and white if it is true. %An indexed image consists of an M-by-N matrix of integers and a Cx3 color map. %Each integer corresponds to an index in the color map and each row in color %map corresponds to an RGB color. Color map must be of class double with values %between 0 and 1.

The elements of a kernel must sum to 1 to preserve brightness: example of valid kernels are kernel_1 = np.array([ [-1, -1, -1], [-1, 9, -1], [-1, -1, -1] ]) ≡ kernel_2 = 1/9*np.array([ [1, 1, 1], [1, 1, 1], [1, 1, 1] ]). gauss_kernel = 1/16 * np.array([ [1, 2, 1], [2, 4, 2], [1, 2, 1] ]). Unweighted smoothing, weighted smoothing (Gaussian blur), Sharpening and Intense Sharpening Kernels respectively are described below

|1 1 1| |0 1 0| | 0 -1 0| |-1 -1 -1| |1 1 1| |1 4 1| |-1 5 -1| |-1 9 -1| |1 1 1| |0 1 0| | 0 -1 0| |-1 -1 -1|

Laplacian Filter: Laplacian filter often refers to the simple 3 × 3 FIR (Finite Impulse Response) filter. The zero-crossing property of the Laplacian filter is used for edge location.

[ 0 −1 0 −1 4 −1 0 −1 0]There are many other filters such as Sepia effect filter, Emboss effect, Mexican hat filter. filter_mex_hat = np.array([ [0, 0, -1, 0, 0], [0, -1, -2, -1, 0], [-1, -2, 16, -2, -1], [0, -1, -2, -1, 0], [0, 0, -1, 0, 0] ]) mexican_hat_img = cv2.filter2D(img, -1, filter_mex_hat). sepia_kernel = np.array([ [0.272, 0.534, 0.131], [0.349, 0.686, 0.168], [0.393, 0.769, 0.189] ]). sepia_img = cv2.transform(img, sepia_kernel).

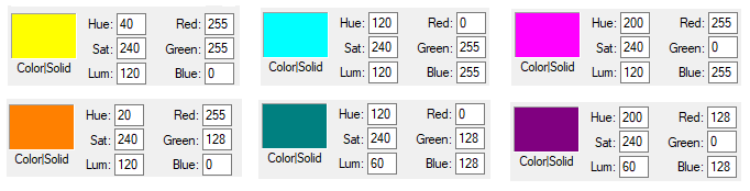

Some standard colours and combination of RGB values are described below. These values can be easily studied and created using MS-Paint, edit colours option.

Pixels, DPI, PPI and Screen Resolution

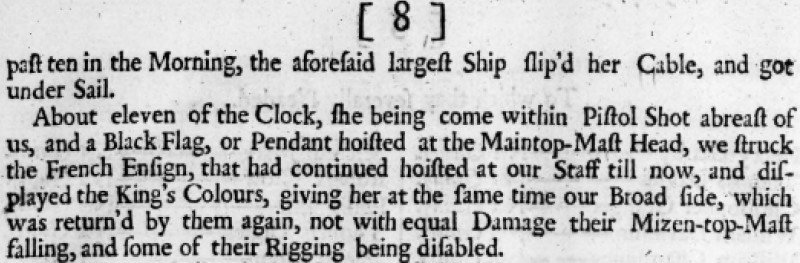

As explained above, images are stored as pixels which are nothing but square boxes of size (in classical definition) 1/72 x 1/72 [in2] with colour intensity defined as RGB combination. However, the dimensions of a pixel are not fixed and is controlled by Pixels per Inch (PPI) of the device. Thus, size of pixel = physical size [inches] of the display / PPI of the display. Or PPI of a display device = 1/(Screen Size) x [(Horizontal Pixels)2 + (Vertical Pixels)2]0.5. Following pictures demonstrate the concept of pixels used in computer through an analogy of colour boxes used by the artist in MS-Excel.

![]()

![]()

NumPy and SciPy arrays of image objects store information as (H, W, D) order (also designated as axis=0, axis=1 and axis=2 respectively. The values can be transposed as img = transpose(-1, 0, 1) = (D, W, H) = transpose(2, 0, 1). Here, (H, W, D) can be access either by (0, 1, 2) or (-3, -2, -1). The EGBA format of image adds alpha channel to describe opacity: α = 255 implies fully opaque image and α = 0 refers to fully transparent image. On a grayscale image, NumPy slicing operation img[:, 10:] = [0, 0] can be used to set 10 pixels on the right side of image to '0' or 'black'. img[:, :10] = [0, 0] sets 10 pixels on the left column to '0'.

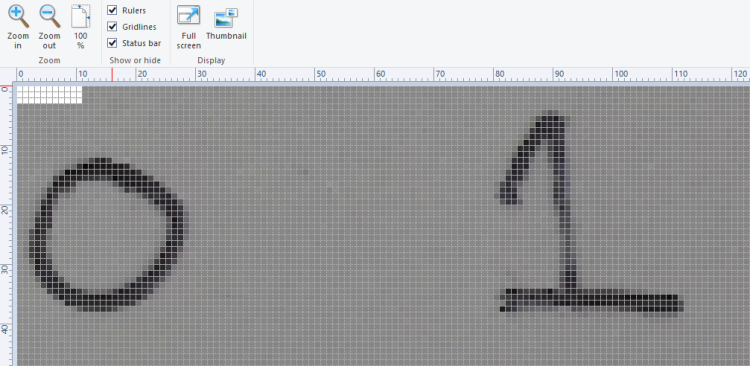

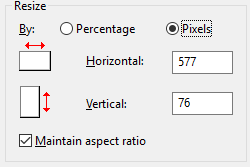

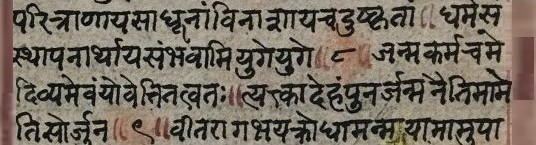

The images when read in OCTAVE and pixel intensities converted into a text file results in following information. Note that the pixel intensity in text file is arranged by walking though the columns, that is the first 76 entries are pixels in first column in vertical direction.

Even though the text file contains one pixel intensity per row, the variables I and G are matrices of size 76 x 577 x 3 and 76 x 577 respectively. The rows with entries "76 577 3" and "76 577" are used to identify the size of the matrices. The portion of image from row numbers from 100 to 250 and column numbers from 500 to 750 can be accessed as image_cropped = image[100:250, 500:750].

As explained earlier, type uint8 stands for unsigned (non-negative) integers of size 8 bit and hence intensities are between 0 and 255. The image can be read back from text file using commands: load("image.txt"); imshow(I); Note the text file generated by this method contains few empty lines at the end of the file and should not be deleted. The text file should have at least one empty line to indicate EOF else it will result in error and the image will not be read successfully.warning: imshow: only showing real part of complex image

warning: called from

imshow at line 177 column 5

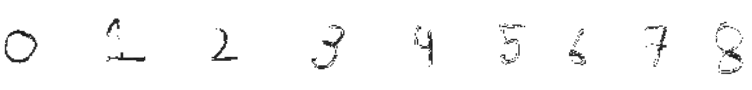

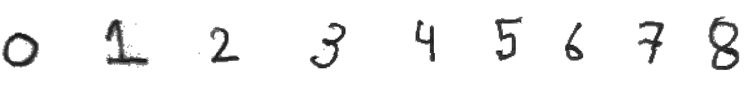

Now, if the pixel intensities above 100 are changed to 255, it results in cleaned digits with sharp edges and white background. In OCTAVE, it is accomplished by statement x(x > 100) = 255. In Numpy, it is x[x > 100] = 255. You can also use & (and) and | (or) operator for more flexibility, e.g. for values between 50 and 100: OCTAVE: A((A > 50) & (A < 100)) = 255, Numpy: A[(A > 50) & (A < 100)] = 255. For a copy of original array: newA = np.where(A < 50, 0, A)

Attributes of Image Data

The images has two set of attributes: data stored in a file and how it is displayed on a device such as projectors. There are terms such as DPI (Dots per Inch), PPI (Pixels per Inch), Resolution, Brightness, Contrast, Gamma, Saturation... This PDF document summarizes the concept of DPI and image size. An explanation of the content presented in the PDF document can be viewed in this video file.

The video can be viewed here.Image File Types: PNG-8, PNG-24, JPG, GIF. PNG is a lossless format with option to have transparency (alpha channel). JPG files are lossy format and quality can be adjusted between 0 to 100%. JPG file cannot have transparency (alpha channel). PNG-8 or 8-bit version of PNG is similar to GIF format which can accommodate 256 colours and this format is suitable for graphics with few colours and solid areas having discrete-toned variation of colours. PNG-24 is equivalent to JPG and is suited for continuous-toned images with number of colours > 256. In effect, a JPG file shall have lesser size (disk space) than PNG with nearly equal or acceptable quality of the image. Screenshots should be saved as PNG format as it will reproduce the image pixel-by-pixel as it appeared originally on the screen.

This Python code uses Pillow to convert all PNG files in a folder into JPG format.

Image Filtering and Denoising

Image filtering is also known as Image Masking. The masking process is similar to a 'mask' or 'cover' we use for our body parts such as face. There are various types of noises that gets into scanned images or even images from digital camera. Gaussian, salt-and-pepper... are few of the names assigned to the type of noises. The concepts applicable to image enhancements are Edge Enhancement = Edge Sharpening, Convolution Filters, Remove Reflection, Glare and Shadow Removal, See-through corrections (visible text of underlying page), Despeckle, Smudge and Stains removal, JPEG compression artefacts, GIF compression pixelization and dot shading...CLAHE (Contrast Limited Adaptive Histogram Equalization) - worked well with for local contrast enhancement. Using different color space, such as HSV/Lab/Luv instead of RGB/BGR helps remove glares. The methods applicable to Document Imaging Applications (scanned texts) may not be effective to Photographic Image Applications (such as X-ray and Computer Tomography Scan images). Some of the requirements of denoising are removal of small noises, deletion of random or lonely pixels, thinning of characters of text in an image... The method to denoise or remove imperfections in an image is generally a two-step process: image segmentation followed by morphological operations.Denoising using skimage, OpenCV: This Python code uses Total Variance method to denoise an image. This method works well for random Gaussian noises but may not yield good result for salt and pepper noise.

| PIL | OpenCV |

| ImageFilter.BLUR | cv2.blur(src, kszie) |

| ImageFilter.MaxFilter(ksize) | cv2.dilate |

| ImageFilter.DETAIL | cv2.filter2D |

| ImageFilter.EDGE_ENHANCE | cv2.Sobel, cv2.Scharr, cv2.Laplacian, cv2.Canny |

| PIL.ImageFilter.EDGE_ENHANCE_MORE | |

| PIL.ImageFilter.EMBOSS | cv2.filter2D |

| PIL.ImageFilter.FIND_EDGES | cv2.Canny |

| PIL.ImageFilter.SMOOTH | cv2.GaussianBlur, cv2.MedianBlur, cv2.bilateralFilter |

| PIL.ImageFilter.SMOOTH_MORE | cv2.GaussianBlur, cv2.MedianBlur, cv2.bilateralFilter |

| PIL.ImageFilter.SHARPEN | cv2.filter2D |

| PIL.ImageFilter.GaussianBlur(radius) | cv2.GaussianBlur(src, ksize, sigmaX) |

This code uses Non-Local Mean (NLM) Algorithm to denoise an image. This method works well for random Gaussian noises but may not yield good result for salt and pepper noise.

OpenCV have algorithms termed as fastNlMeanDenoising with 4 variants: astNlMeansDenoising() - works with a single grayscale images, fastNlMeansDenoisingColored() - works with a color image, fastNlMeansDenoisingMulti() - works with image sequence such as videos (grayscale images), fastNlMeansDenoisingColoredMulti() - same as previous one but for color images. As per document, "Noise expected to be a Gaussian white noise." Gaussian white noise (GWN) is a stationary and ergodic random process with zero mean that is defined by fundamental property: "any two values of GWN are statistically independent no matter how close they are in time." Thus, these methods shall not work well on images containing texts such scanned copies of a textbook.Filter2D for removing speckles and isolated pixels: Blob is a group of connected pixels in an image that share some common property such as area, grayscale value, inertia, circularity...

kernel = np.ones((3,3), dtype=np.uint8)

kernel[1,1] = 0

# Create a sample (image) array that shows the 'blob' features

srcImg = np.array(

[[1,0,1,1,1,0,0,0],

[0,0,0,0,1,1,0,0],

[1,0,1,1,1,1,1,1],

[1,1,1,1,1,1,1,1],

[0,0,1,1,1,0,1,1],

[1,1,1,1,1,1,1,1],

[1,1,1,0,1,1,0,1],

[1,1,1,1,1,1,1,1]], dtype=np.uint8)

#input array needs to be converted to int8 or float32

srcImg = np.float32(srcImg)

mask = cv2.filter2D(srcImg, -1, kernel, borderType=cv2.BORDER_CONSTANT)

srcImg[np.logical_and(mask==8, srcImg==0)] = 1

cv2.imwrite('imgFiltered2D.png', srcImg*255)

| Input Image | Output Image |

|  |

| 3 isolated pixels present | 3 isolated pixels removed |

cv2.filterSpeckles(bw_image_16bit, 'newVal' to paint-off the speckles, 'maxSpeckleSize' maximum number of pixels to be considered in a speckle, 'maxDiff' between neighbor disparity pixels to put them into the same blob) can be used to get similar output.

srcImg = cv2.filterSpeckles(srcImg, 1, 2, 100)[0]. img_blurred = cv2.blur(image, (5, 5)) where (5, 5) is the kernel size.This Python script uses Median Blur and Histogram Equalization to denoise a coloured image. Median Filters do not work well on texts as it filters out (or chip away) portions of characters. For median blur, kernel size should be an odd number else cv2 shall throw an error. Median filters work well on photographic images without text. Filters that are designed to work with gray-scale images shall not work with colour images. scikit-image provides the adapt_rgb decorator to apply filters on each channel of a coloured image.

Excerpt from scikit-image docs: "Removing small objects in grayscale images with a top hat filter: the top-hat transform is an operation that extracts small elements and details from given images. A white top-hat transform is defined as the difference between the input image and its (mathematical morphology) opening."

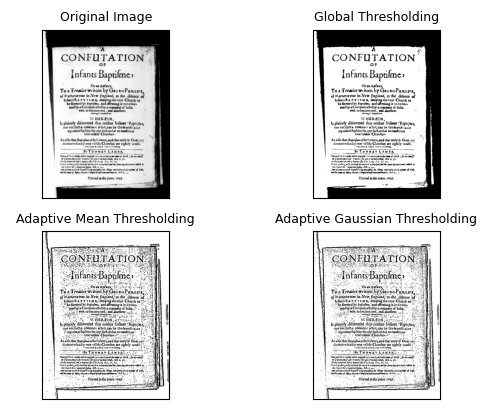

This is another code which uses Dilation, Blurring, Subtraction and Normalization to denoise an image and make the background white. This method applies well on scanned documents containing text and is compared with Adaptive Threshold option available in OpenCV. The adaptive threshold (such as OTSU thresholding) does not require a global threshold value and it can be further improved by splitting (tiling) the image into smaller rectangular segments for local background normalization (as explained in the page leptonica.org/binarization.html). OTSU method is an statistical method which minimizes in-class variance and maximizes between-the-class variance. Here, class refers to "set of pixels belong to a region". "Leptonica is a pedagogically-oriented open source library containing software that is broadly useful for image processing and image analysis applications."

Image Thresholding: This is a process of converting pixel value above or below a threshold to an specified value. This operation can be used to segment an image. For example, a grayscale image can be converted to black-and-white by converting all pixels having intensity value ≤ 64 to 0. Image thresholding is used to change the background of scanned text into white. One can manually calculate the histogram of dominant pixel intensities using cv2.CalcHist() or numpy.histogram or pyplot.hist() from matplotlib which can be further used to define threshold colour intensity value.

The grayscale images contain 256 shades of gray! That means if you have to convert the image into binary black and white, any intensity between 0 and 255 are noise! The thresholding produces jagged (stair-stepped) edges as a rule than exception. This is a common issue with threshold when if text is 'thin', the gaps in the letters appear after threshold. On the other hand when fonts are 'thick', the letters start merging (merged characters and character erosion).The approach to use dilution followed by erosion fuses neighboring texts in the dilution step which cannot be separated in the subsequent erosion step. Increasing the resolution of input image by scaling up and applying dilution followed by erosion are sometimes helpful but not much as the image has to be scaled down again.

def imageThreshold(imgName, thresh_global, blur_kernel, adapt_kernel):

img = cv2.imread(imgName, cv2.IMREAD_GRAYSCALE)

assert img is not None, "File could not be read, check with os.path.exists()"

img_blurred = cv2.medianBlur(img, blur_kernel)

methods = [cv2.THRESH_BINARY, cv2.ADAPTIVE_THRESH_MEAN_C,

cv2.ADAPTIVE_THRESH_GAUSSIAN_C]

ret, thresh_binary = cv2.threshold(img, thresh_global, 255, methods[0])

thresh_adapt_mean = cv2.adaptiveThreshold(img, 255, methods[1],

methods[0], adapt_kernel, 2)

thresh_adapt_gauss = cv2.adaptiveThreshold(img, 255, methods[2],

methods[0], adapt_kernel, 2)

titles = ['Original Image', 'Global Thresholding',

'Adaptive Mean Thresholding', 'Adaptive Gaussian Thresholding']

images = [img, thresh_binary, thresh_adapt_mean, thresh_adapt_gauss]

cv2.imwrite('Gauss_Threshold.png', thresh_adapt_gauss)

return titles, images

Excerpt from scikit-image: "If the image background is relatively uniform, then you can use a global threshold value. However, if there is large variation in the background intensity, adaptive thresholding (or local or dynamic thresholding) may produce better results. Note that local is much slower than global thresholding. Otsu's threshold method can be applied locally. For each pixel, an optimal threshold is determined by maximizing the variance between two classes of pixels of the local neighborhood defined by a structuring element."

def plot_multiple_images(titles, images, n_rows, img_array=True):

n = len(images)

n_cols = math.ceil(n / n_rows)

for i in range(n):

if img_array:

image_i = images[i]

else:

image_i = cv2.imread(images[i])

plt.subplot(n_rows, n_cols, i+1), plt.imshow(image_i, 'gray')

plt.title(titles[i], fontsize=9)

plt.xticks([]), plt.yticks([])

plt.show()

titles, images = imageThreshold('Input.png', 127, 3, 7)

plot_multiple_images(titles, images, 2, True)

for rad in range(1, 4): kernel = cv2.getStructuringElement(MORPH_ELLIPSE, Size(2*rad+1, 2*rad+1)) morph = cv2.morphologyEx(morph, cv2.MORPH_CLOSE, kernel) morph = cv2.morphologyEx(morph, cv2.MORPH_OPEN, kernel)

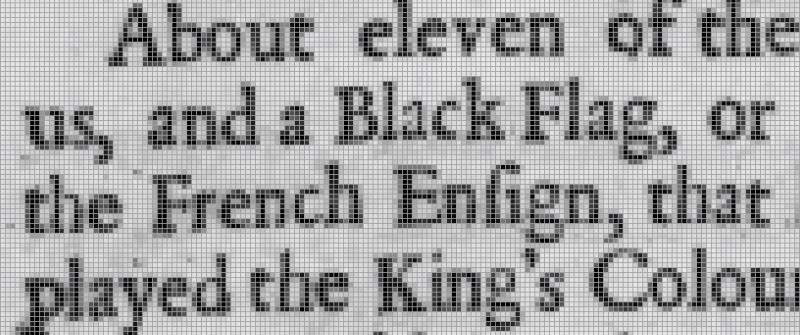

Image Masking

The mask operation works on an input image and a mask image with logical operator such as AND, NAND, OR, XOR and NOT. An XOR (eXclusive OR) operation is true if and only if one of the two pixels is greater than zero, but both pixels cannot be > 0. The bitwise NOT function flips pixel values that is pixels that are > 0 are set to 0, and all pixels that are equal to 0 are set to 255. RGB = [255 255 255] refers to 'white' colour and RGB = [0 0 0] denotes a perfectly 'black' colour.

- AND: A bitwise AND is true (= 1 or 255) if and only if both pixels are > 0. In other words: white + anycolor = anycolor, black + anycolor = black.

- OR: A bitwise OR is true (=1 or 255) if either of the two pixels is > 0. With bitwise_or: white + anycolour = white, black + anycolour = anycolour.

- XOR: A bitwise XOR is true (=1 or 255) if and only if one of the two pixels is > 0, but not both are zero.

- NOT: A bitwise NOT inverts the on (1 or 255) and off (0) pixels in an image.

The list comprehension statement in NumPy can be used to convert image pixels based on threshold directly: img = [0 if img_pixel > thresh_intensity else img_pixel for img_pixel in img]. This is an IF condition inside a FOR loop and it needs to be read backward to understand the logic behind this one liner.

[expression for item in list if conditional]

for item in list:

if conditional:

expression

[expr_1 if condition_1 else expr_2 for item in list]

for item in list:

if condition_1:

expr_1

else:

expr_2Distance Masking: Determine the distance of each pixel to the nearest '0' pixel that is the black pixel. cv2.add(img1, img2) is equivalent to numPy res = img1 + img2. There is a difference between OpenCV addition and Numpy addition. OpenCV addition is a saturated operation while Numpy addition is a modulo operation. cv2.add(250, 25) = min(255, 275) = 255, np.add(250, 25) = mod(275, 255) = 20. Note there is no np.add function, used for demonstration purposes only.

| Input Image | Mask Image | Operation | Outcome of Operation |

| Binary or Grayscale | Binary | OR | Pixels having value 0 in mask set to 0 in output, other pixels from input image retained |

| Input Image | Mask Image | AND | Pixels having value 0 in mask set to 1 or 255 in output, other pixels from input image retained |

Circular Crop: This Python code uses OpenCV to create a circular crop of an image. The input image and cropped image are shown below. In HTML, border-radius property can be set to 50% to make the image cropped to a circle.

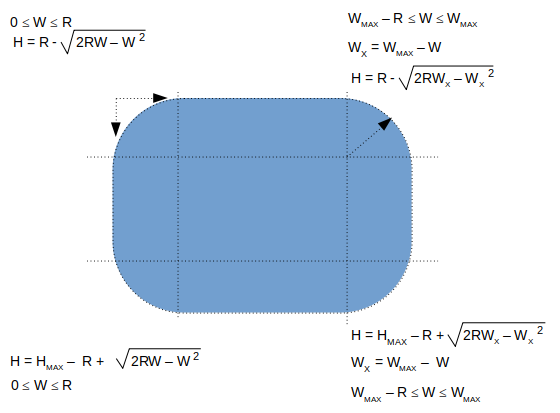

Alternatively, the image can be read into a NumPy array and pixels beyond each channel beyond the disk can be set to desired colour. This Python code uses OpenCV and NumPy array to create a circular crop of an image. The image is read using OpenCV, the BGR channels are extracted as NumPy arrays and then the pixels of each channel are set to white beyond the boundary of circular disk. Finally, BGR channels are merged to create the coloured image. Following image describes the formula required to add a round to the corners of an image

def createRoundedCorners(image, radius):

img = cv2.imread(image)

img_w, img_h = img.shape[1]-1, img.shape[0]-1

for w in range(0, radius):

ht = radius - int(np.sqrt(2*radius*w - w * w))

for h in range(0, ht):

img[h, w] = 255

img[h, img_w - w] = 255

img[img_h - h, w] = 255

img[img_h - h, img_w - w] = 255

return imgUnsharp Mask

Unsharp mark is a bit misnomer as it is used to sharpen an image. Unsharp mask is equivalent to blurred version of image where sharpened image = original image - blurred image. Unsharp mask locates pixels that differ from neighbouring pixels by the specified threshold (delta). It then increases the contrast of the pixels by the amount specified. For pixels within the specified radius, the lighter (or brighter) pixels get lighter (or brighter), the dark ones get darker.Excerpt from scikit-image docs: "Unsharp masking is a linear image processing technique which sharpens the image. The sharp details are identified as a difference between the original image and its blurred version. These details are then scaled, and added back to the original image: enhanced image = original + amount * (original - blurred). The blurring step could use any image filter method, e.g. median filter, but traditionally a Gaussian filter is used. The radius parameter in the unsharp masking filter refers to the sigma parameter of the Gaussian filter."

Connected Component Labeling

This Python script can be used to update an image background to white. This code uses Connected Component Labeling (CCL) method to remove the dark patches. The code customised for all images inside a folder can be found here. In case the text contains shadow in the background, the gray-scale image, the contrast has to be adjusted to accentuate the dark grays from the lighter grays - this can be achieved by imgGray = cv2.multiply(imgGray, 1.5) though the multiplier 1.5 used here needs to be worked out by trial-and-error. 1.1 is a recommended start value. Gaussian Blur and morphological operations such as erosion and dilation would be required to make the text sharper: kernel = np.ones((2, 1), np.uint8), img = cv2.erode(img, kernel, iterations=1). "Using Machine Learning to Denoise Images for Better OCR Accuracy" from pyimagesearch.com is a great article to exploit the power of ML to denoise images containing dominantly texts and noises. Before applying CCL, the image has to be converted into a binary format: threshImg = cv2.threshold(grayImg, 0, 255, cv2.THRESH_BINARY_INV | cv2.THRESH_OTSU)[1] can be used for this. cv2.THRESH_BINARY_INV applies threshold and inverts image colour to black background and white foreground.

nLables, labels, stats, centroids = cv2.connectedComponentsWithStats( imgBlackBackground, 8, cv2.CV_32S ) or outputCCWS = cv2.connectedComponentsWithStats(...) and (nLables, labels, stats, centroids) = outputCCWS, 8 is connectivity type (another option is 4), CV_32S is output image label type

- nLabels = total number of unique labels. The background is label 0, and the additional objects are numbered from 1 to nLabels-1.

- labels = list of masks created from connected components (CC) - spatial dimensions same as input image

- stats = statistical information of CC: cv2.CC_STAT_LEFT = stats[:, 0], cv2.CC_STAT_TOP = stats[:, 10], cv2.CC_STAT_WIDTH = stats[:, 2], cv2.CC_STAT_HEIGHT = stats[:, 3], cv2.CC_STAT_AREA = stats[:, 0] = stats[:, 4]

- Get maximum area of CC: max(stats[1:, -1]). Array of areas of connected components can be created using outputCCWS[2][:, 4].

- Get CC with 3 highest areas: sorted(stats[1:, -1], reverse=True)[:3]

- Get second highest area of CC: max( stats[1:, -1].remove(max(stats[1:, -1])) ) - note that this is a destructive approach and the items from list get deleted.

- Save ith CC label: cclMask = (labels == i).astype("uint8") * 255, cv2.imwrite( "ccComp_i.png", cv2.bitwise_or( cclMask)). Bitwise OR operation is needed to invert background colour to white - note that cv2.THRESH_BINARY_INV was used during threshold operation.

- Further dilation and/or erosion operation may need to be performed on each of the Connected Components to remove isolated pixels, tiny holes and irregular shapes.

contours_sorted = sorted(contours, key = cv2.contourArea, reverse = True)

for c in contours_sorted[1:-1]:

x, y, w, h = cv2.boundingRect(c)

input_img[y:y+h, x:x+w] = 255

cv2.imwrite('imgDespecled.png', input_img)

ImageMagick has options to use Connected Component Labelling technique to remove noises such as random pixels and despeckle an image. Excerpts from webpage: "Connected-component labelling (alternatively connected-component analysis, blob extraction, region labelling, blob discovery, or region extraction) uniquely labels connected components in an image. The labelling process scans the image, pixel-by-pixel from top-left to bottom-right, in order to identify connected pixel regions, i.e. regions of adjacent pixels which share the same set of intensity values."

Find background colour of an image: there is no unique and universal method to find the background colour of an image. One direct approach is to get the pixel value with the highest occurrence. This may not yield correct value if two pixels are nearly equal. Depending upon type of image, the method can be adjusted. For example, an image containing (lot of) texts only, the method to find pixel value of highest occurrence may give correct value of background color. Alternatively, in such images when there are no or sparse noises present, finding the pixel of maximum continuous occurrence in each row or column shall yield (universally) correct background colour.

Image Denoising using ML: Noise2Void is a widely used denoising algorithm, and is readily available from the n2v python package which isbased on TensorFlow. Refer: github.com/CAREamics/careamics - "CAREamics is a PyTorch library aimed at simplifying the use of Noise2Void and its many variants and cousins (CARE, Noise2Noise, N2V2, P(P)N2V, HDN, muSplit)." Articles describing the underlying principles are [1]"Noise2Noise: Learning Image Restoration without Clean Data" by Jaakko Lehtinen et al. [2]"Noise2Void - Learning Denoising from Single Noisy Images" by Alexander Krull et al. [3]"Noise2Self: Blind Denoising by Self-Supervision" by Joshua Batson and Loic Royer.

Pixel Multiplication

Also known as Graylevel scaling (and not same as geometrical scaling), this operation can be used to brighten (scaling factor > 1) or darken (scaling factor < 1) an image. If the calculate value of pixel after multiplication is > maximum allowed value, it is either truncated to the maximum value or wrapped-around the minimum allowed pixel value. For example, a pixel value of '200' when scaled by a factor 1.3, the new value of 260 shall get truncated to 255 or wrapped to 5 (= 260 - 255).There is similar operation "Image Segmentation with Distance Transform and Watershed Algorithm" available at docs.opencv.org/3.4/d2/dbd/tutorial_distance_transform.html. Here, OpenCV function distanceTranform is used to obtain derived representation of a binary image, where the value of each pixel is replaced by its distance to the nearest background pixel.

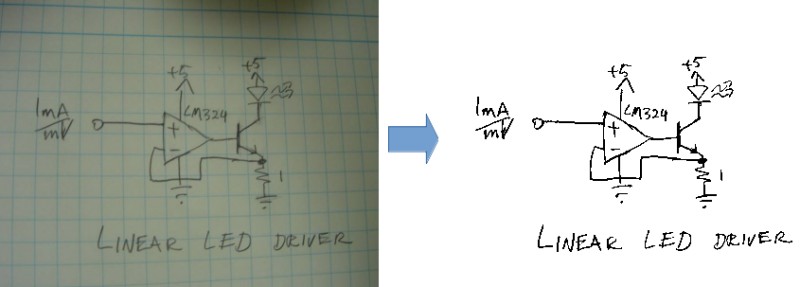

Adapted from stackoverflow.com/../what-processing-steps-should-i-use-to-clean-photos-of-line-drawings, the Python + OpenCV code attached here can be used to clean image as shown below.

Morphological Operations

Morphology refers to "the study of the forms / shape / structure of things". In linguistics, it is study about pattern of word formation (inflection, derivation, and compounding). Image processing methods that transform images based on shapes are called Morphological Transformations. Erosion is the morphological operation that is performed to reduce the size of the foreground object. Dilaton is opposite of erosion. Thus, thickness of fonts can be reduced using erosion and vice versa. Bright regions in an image tend to “get brighter” after Dilation, which usually results in an enhanced image. Removing noise from images is one of the application of morphological transformations. Morphological operators require Binary Images which are images whose pixels have only two possible intensity values. They are normally displayed as black and white and the two values are 0 for black, and either 1 or 255 for white.

Erosion is also known as minimum filter which replaces or removes objects smaller than the structure (thinning operation - removes foreground pixels). Similarly, dilation is called maximum filter (thickening operation - adds foreground pixels). A structuring element or kernel is a simple shape used to modify an image according to the shape locally fits or misses the image. A structuring element is positioned all possible locations in the image and thus sometime may not fit on boundary pixels. Note that morphological operations such as Erosion and Dilation are based on set operations whereas convolutions are based on arithmetic operations.

Tutorial at docs.opencv.org titled "Extract horizontal and vertical lines by using morphological operations" demonstrates how horizontal lines can be removed as shown below.

Excerpt from docs.opencv.org: "During erosion a pixel in the original image (either 1 or 0) will be considered 1 only if all the pixels under the kernel is 1, otherwise it is eroded (made to zero)." Excerpt from pyimagesearch.com: "A foreground pixel in the input image will be kept only if all pixels inside the structuring element are > 0. Otherwise, the pixels are set to 0 (i.e. background)." From OpenCV tutorial: "The kernel B has a defined anchor point, usually being the center of the kernel. As the kernel B is scanned over the image, we compute the maximal pixel value overlapped by B and replace the image pixel in the anchor point position with that maximal value. As you can deduce, this maximizing operation causes bright regions within an image to "grow" (therefore the name dilation)."

As you may have realized, none of the 3 definitions quoted above is clear where the use of 0 and 1 for boolean and pixel intensities are mixed-up. Let's see the effect of kernel and erosion with following examples.

| erosion = cv2.erode(img, np.ones((3, 3), np.uint8), iterations = 1) | ||

| Image with black background | Image after erosion | Image after Dilation |

|  |  |

| Image with white background | Image after erosion | Image after Dilation |

|  |  |

| As evident now, erosion adds black pixels and depending upon the background colour, the thickening (white background) or thinning (black background) effect can be observed. | ||

A key consideration while using morphological operations is the background colour of the image. Should it be white or black? Is the kernel definition dependent on whether background of image is white or black? docs.opencv.org recommends: "(Always try to keep foreground in white)". If you have an image with white background, in order to comply this recommendation, use whiteForeground = cv2.bitwise_not( blackForeground ) before erosion and then blackForeground = cv2.bitwise_not( whiteForeground ) after erosion. This short piece of code describes these steps.

A = Image, B = Kernel --- Erosion = A ⊖ B, Dilation: A ⊕ B, Opening: A o B = (A ⊖ B) ⊕ B, Closing: A ⊚ B = (A ⊕ B) ⊖ B. A combination of morphological operations can be used for smoothing and dirt removal. For example: convert image background to black -> dilate to remove white dots (lonely pixels in original image) -> erode to bring text to original thickness -> invert background colour of image back to white. The operations can be chain-combined as: k_blur = ((3, 3), 1), k_erode = (5, 5), k_dilate = (3, 3). cv2.imwrite( 'imgMorphed.png', cv2.dilate( cv2.erode( cv2.GaussianBlur( cv2.imread('Input.png', 0) / 255, k_blur[0], k_blur[1]), np.ones(k_erode)), np.ones(k_dilate) ) * 255 ).

cv2.getStructuringElement(cv2.MORPH_RECT, (50,1)): this can be used to create a horizontal line to remove such lines from an image. cv2.MORPH_RECT, (1, 50) can be used to create a vertical line.

Rectangular Kernel: cv2.getStructuringElement(cv2.MORPH_RECT,(3,3))

array([[1, 1, 1],

[1, 1, 1],

[1, 1, 1]], dtype=uint8)

Elliptical Kernel: cv2.getStructuringElement(cv2.MORPH_ELLIPSE,(5,5))

array([[0, 0, 1, 0, 0],

[1, 1, 1, 1, 1],

[1, 1, 1, 1, 1],

[1, 1, 1, 1, 1],

[0, 0, 1, 0, 0]], dtype=uint8)

Cross-shaped Kernel: cv2.getStructuringElement(cv2.MORPH_CROSS,(3,3))

array([[0, 1, 0],

[1, 1, 1],

[0, 1, 0], dtype=uint8)

Main Function

import cv2, sys

import numpy as np

def imgMorphOperation(imgName, kernel_size, imgType='File', morph='Opening'):

#imgType = 'File' or NumPy 'Array' if already loaded by cv2.imread

#morph = 'Opening', 'Closing', 'Dilation', 'Erosion'

if imgType == 'File':

img = cv2.imread(imgName, cv2.IMREAD_GRAYSCALE)

else:

img = imgName

# Otsu's thresholding

ret,img = cv2.threshold(img, 0, 255, cv2.THRESH_BINARY+cv2.THRESH_OTSU)

kernel = np.ones((kernel_size, kernel_size), np.uint8)

if morph == 'Opening':

imgMorphed = cv2.morphologyEx(img, cv2.MORPH_OPEN, kernel)

elif morph == 'Erosion':

imgMorphed = cv2.erode(img, kernel, iterations = 1)

elif morph == 'Dilation':

imgMorphed = cv2.dilate(img, kernel, iterations = 1)

elif morph == 'Closing':

imgMorphed = cv2.morphologyEx(img, cv2.MORPH_CLOSE, kernel)

else:

print("\nMorphological operation not defined! Exiting. \n")

sys.exit()

return imgMorphed

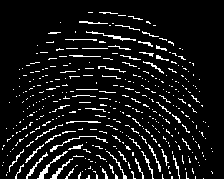

Original Image: Reference: "Morphological Image Processing" by Preechaya Srisombut, Graduate School of Information Sciences and Engineering,Tokyo Institute of Technology

imgName = 'fingerPrintWithNoise.png'

imgEroded = imgMorphOperation(imgName, 3, imgType='File', morph='Erosion')

cv2.imwrite('fingerPrintEroded.png', imgEroded)

Image after First Erosion

imgDilated1 = imgMorphOperation(imgEroded, 3, imgType='Array', morph='Dilation')

cv2.imwrite('fingerPrintDilate1.png', imgDilated1)

Image after First Dilation

imgDilated2 = imgMorphOperation(imgDilated1, 3, imgType='Array', morph='Dilation')

cv2.imwrite('fingerPrintDilate2.png', imgDilated2)

Image after Second Dilation

imgFinal = imgMorphOperation(imgDilated2, 3, imgType='Array', morph='Erosion')

cv2.imwrite('fingerPrintDenoised.png', imgFinal)

Denoised Image after Second Erosion

Image deskewing

First thing first: to deskew an image, remove the noise especially around the boundary or edges of the image else the contour of largest area shall be the one formed by continuous dark patches at the edges (almost the size of the image itself). This is the method of straightening a rotated image - a mandatory step in image pre-processing before feeding the cleaned-up image to an Optical Character Recognition (OCR) tool. The recommended steps are described below:- Convert the image to gray scale

- Apply slight blurring to decrease intensity of noise in the image

- Invert and maximize the colors of image by thresholding to make text block detection easier, thus making the text white and changing background to black

- Merge all printed characters of the block via dilation (expansion of white pixels) to find text blocks

- Use larger kernel on X axis to get rid of all spaces between words and a smaller kernel on Y axis to blend in lines of one block between each other, but keep larger spaces between text blocks intact

- Find areas of the text blocks of the image

- Use simple contour detection with minimum area rectangle for all the block of text

- Determine skew angle: angle the texts need to be rotated to make them aligned to page: there are various approaches to determine skew angle, such as average angle of all text blocks, angle of the middle block or average angle of the largest, smallest and middle blocks. Simple one using the largest text block works fine in most of the cases.

import cv2

import numpy as np

def cleanTextImage(img, min_area: int):

'''

Ref: stackoverflow.com/ ... /clean-text-images-with-opencv-for-ocr-reading

This code requires (assumes) properly binarized white-on-black image (e.g.

after grayscale conversion, black hat morphing and Otsu's thesholding).

'''

num_comps, labeled_pix, comp_stats, comp_centroids = \

cv2.connectedComponentsWithStats(img, connectivity=4)

# Get indices/labels of the remaining components based on the area stat

# (skip the background component at index 0)

comp_labels = [i for i in range(1,num_comps) if comp_stats[i][4] >= min_area]

# Filter the labeled pixels based on the remaining labels,

# assign pixel intensity to 255 (uint8) for the remaining pixels

cln_img = np.where(np.isin(labeled_pix,comp_labels)==True,255,0).astype('uint8')

return cln_img

Usage:

_,img = cv2.threshold(cv2.imread('In.jpg',0), 0,255,cv2.THRESH_BINARY+cv2.THRESH_OTSU)

cv2.imwrite('cln_img.jpg', cleanTextImage(img, 10))Template Matching

Similar to erosion and other morphological operations, there is another utility named Template Matching where an image template slides (similar to kernel or structuring elements - moving the patch one pixel at a time: left to right, up to down) and compares the template and overlapping patch of input image. At each location, a metric is calculated so it represents how "good" or "bad" the match at that location is (or how similar the patch is to that particular area of the source image). It returns a grayscale image, where each pixel denotes how much does the neighbourhood of that pixel match with template. The brighter pixels representing good match will have a value closer to 1, whereas a relatively darker pixel representing not so good match will have a value close to 0. If input image has the size (W x H) and template image is of size (w x h), output image will have a size of (W-w+1, H-h+1).The value of match and location of match from the metric calculated by cv2.matchTemplate() can be retried by min_val, max_val, min_loc, max_loc = cv2.minMaxLoc(result) to get the minimum and maximum value of 'match' as well the coordinates indicating the "top-left, bottom-right" corners for the bounding box. min_loc and max_loc are touples of (x, y).

import cv2 as cv

import numpy as np

img_rgb = cv.imread('NoisyImage.png')

img_gray = cv.cvtColor(img_rgb, cv.COLOR_BGR2GRAY)

imgTemplate = np.array([[1, 1, 1, 1], [1, 0, 0, 1],

[1, 0, 0, 1], [1, 1, 1, 1]], np.uint8) * 255

w, h = imgTemplate.shape[::-1]

res = cv.matchTemplate(img_gray, imgTemplate, cv.TM_CCOEFF_NORMED)

# If multiple occurrences of match found, cv.minMaxLoc() won't give all

# the locations. In that case, thresholding needs to be used.

threshold = 0.8

loc = np.where(res >= threshold)

for pt in zip(*loc[::-1]):s

#Add rectangle with red lines around matched patches

#cv.rectangle(img_rgb, pt, (pt[0] + w, pt[1] + h), (0,0,255), 1)

# Change pixels in the matched patches to white (255)

img_gray[pt[1]:pt[1] + h, pt[0]:pt[0] + w] = 255

cv.imwrite('imageMatched.png', img_gray)

If np.uint8 is ignored in imgTemplate array definition, following error occurs in statement: res = cv.matchTemplate(img_gray, imgTemplate, cv.TM_CCOEFF). "cv2.error: OpenCV(4.7.0) /io/ opencv/ modules/ imgproc/ src/ templmatch.cpp: 1164: error: (-215: Assertion failed) (depth == CV_8U || depth == CV_32F) && type == _templ.type() && _img.dims() <= 2 in function 'matchTemplate'"

Find Contours

From docs.opencv.org/ ... /tutorial_py_contours_begin.html: Contours are curves joining all the continuous points (along the boundary), having same color or intensity. The contours are a useful tool for shape analysis and object detection and recognition. In OpenCV, finding contours is like finding white object from black background. So object to be found should be white and background should be black.

cv2.findContours() function is used to detect objects in an image. Usage: imgCont, contours, hierarchy = cv.findContours(threshold, cv.RETR_TREE, cv.CHAIN_APPROX_SIMPLE) where imgCont is modified image returned from findContour operation, contours is a Python list of all the contours in the image (each contour as a Numpy array of (x,y) coordinates of boundary points of the object). It accepts argument to specify Contour Retrieval Mode and Contour Approximation Method. This is especially useful to identify nested contours (object inside another object).cv2.RETR_LIST: It simply retrieves all the contours, but doesn't create any parent-child relationship thus they all belongs to same hierarchy level. cv2.RETR_TREE: It retrieves all the contours and creates a full family hierarchy list. cv2.RETR_CCOMP: This flag retrieves all the contours and arranges them to a 2-level hierarchy. Thus, external contours of the object (i.e. its boundary) are placed in hierarchy-1 and the contours of holes inside object (if present) is placed in hierarchy-2. cv2.RETR_EXTERNAL: If you use this flag, it returns only extreme outer flags. All child contours are left behind.

cv2.CHAIN_APPROX_SIMPLE removes all redundant points and compresses the contour for example for straight lines only end points are needed. cv2.CHAIN_APPROX_NONE stores all boundary points.

Draw Contours

cv.drawContours(img, contours, -1, (255, 255, 0), 2): cv2.drawContours function is used to draw any shape for which the boundary points are known. Its first argument is source image, second argument is the contours which should be passed as a Python list, third argument is index of contours (useful when drawing individual contour, -1 can be used to draw all contours), next argument is color and the last argument is thickness of boundary lines.Following function can be used to remove all horizontal lines from an image. Vertical lines can be removed by changing (kernel_size, 1) to (1, kernel_size).

def imgRemoveHorizLines(imgName, imgType='File', kernel_size=50):

if imgType == 'File':

image = cv2.imread(imgName)

else:

image = imgName

imGray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

thresh = cv2.threshold(imGray, 0,255, cv2.THRESH_BINARY_INV + cv2.THRESH_OTSU)[1]

hr_krnl = cv2.getStructuringElement(cv2.MORPH_RECT, (kernel_size, 1))

rm_hr = cv2.morphologyEx(thresh, cv2.MORPH_OPEN, hr_krnl, iterations=2)

cnts = cv2.findContours(rm_hr, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

if len(cnts) == 2:

cnts = cnts[0]

else:

cnts = cnts[1]

for c in cnts:

cv2.drawContours(image, [c], -1, (255,255,255), 3)

cv2.imwrite('imgHorizLinesRemoved.png', image)

return image

RUN LENGTH SMOOTHING ALGORITHM (RLSA): block segmentation and text discrimination method used in Document Image Processing to extract the region of interest (ROI) such as block-of-text, title, and content. This method can be used to remove text in images where content from next / previous page gets added while scanning pages from the books.

Animations using Python

Convert PNG to Animated GIF: Click here to get a Python script to convert a set of PNG file to animated GIF.

There are few requirements to be kept in mind while using VideoWriter in OpenCV else video writing fails silently - no valid video file is written: [1] All frames should of same size including number of channels [2] All images should be either coloured or black-and-white [3] If input images are monochrome (black and white or grayscale) type, it should be indicated with argument isColor = False or '0' such as cv2.VideoWriter('output.avi', fourcc, 25, size, 0) [4] The images must be in BGR format (and not RGB) or it will not write any video [5] FFmpeg should be configured properly [6] Try different codecs and see which one works or use "-1" in place of the codec flag and select one from the list [7] frameSize required by cv2.VideoWriter is (width, height) and not (height, width) which is the shape of image array resulting from cv2.imread or Image.open [8] Specify frames per second or FPS argument as type float [9] If folder or path does not exist, VideoWriter will fail silently.Animation of a sine wave. The Python code can be found here.

def rotateImageInCircle(images, icon_w, icon_h, radius):

num_images = len(images)

frame_w = ((icon_w + 2 * radius) // 2) * 2

frame_h = ((icon_h + 2 * radius) // 2) * 2

xc = frame_w // 2

yc = frame_h // 2

img_frames = []

for theta in range(360):

frame = np.ones((frame_h, frame_w, 3), dtype=np.uint8)*255

for i, image in enumerate(images):

img = cv2.resize(cv2.imread(image), (icon_h, icon_w))

angle = (i/num_images + theta/360) * 2 * np.pi

x = int(xc + radius * np.cos(angle))

y = int(yc + radius * np.sin(angle))

img_x = x - icon_w // 2

img_y = y - icon_h // 2

img_x = max(0, min(img_x, frame_w - icon_w))

img_y = max(0, min(img_y, frame_h - icon_h))

frame[img_y:img_y + icon_h, img_x:img_x + icon_w] = img

img_frames.append(frame)

return frame_w, frame_h, img_framesImage to PDF

This Python code converts all the images stored in a folder into a PDF file.

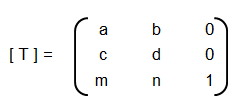

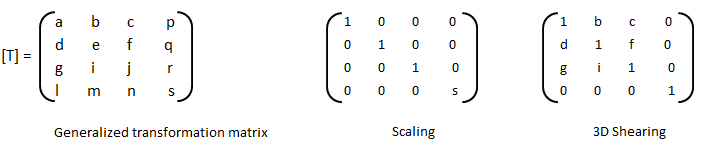

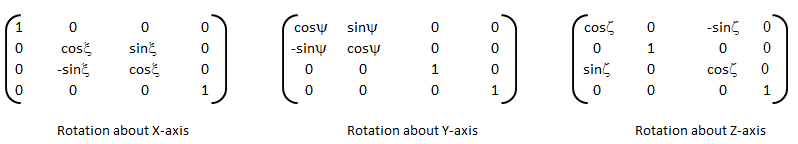

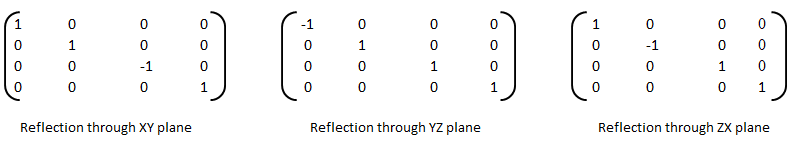

Coordinate Transformation Matrices

If {X} is a row vector representing a 2D or 3D coordinates of a point or pixel, {X'} = [T] {X} where [T] is the transformation matrix. Thus:

| Operation | a | b | c | d | Remark |

| Scaling | ≠ 0, ≠ 1 | 0 | 0 | ≠ 0, ≠ 1 | |

| Reflection about y-axis | -1 | 0 | 0 | 1 | |

| Reflection about x-axis | 1 | 0 | 0 | -1 | |

| Reflection about origin | < 0 | 0 | 0 | < 0 | |

| Shear | 0 | ≠ 0, ≠ 1 | ≠ 0, ≠ 1 | 0 | |

| Rotation: 90°CCW about origin | 0 | 1 | -1 | 0 | |

| Rotation: 180°CCW about origin | -1 | 0 | 0 | -1 | |

| Rotation: 270°CCW about origin | 0 | -1 | -1 | 0 | |

| Rotation: θ CCW about origin | cosθ | sinθ | -sinθ | cosθ | |

| Reflection about x-axis | -1 | 0 | 0 | 1 | |

| Reflection about x-axis | 1 | 0 | 0 | -1 | |

| Reflection about y = x | 0 | 1 | 1 | 0 | |

| Reflection about y = -x | 0 | -1 | -1 | 0 |

Rotation is assumed to be positive in right hand sense or the clockwise as one looks outward from the origin in the direction along the rotation axis. The right hand rule of rotation is also expressed as: align the thumb of the right hand with the positive direction of the rotation axis. The natural curl of the fingers gives the positive rotation direction. Note the the x-coordinate of the position vector will not change if rotation takes place about x-axis, y-coordinate of the position vector will not change if rotation takes place about y-axis and so on.

Scaling: if a = d and b = c = 0, uniform scaling occurs. A non-uniform expansion or compression will result if a = d > 1 or a = d < 1 respectively. Scaling looks like an apparent translation because the position vectors (line connecting the points with origin) are scaled and not the points. However, if the centroid of the image or geometry is at the origin, a pure scaling without apparent translation can be obtained.

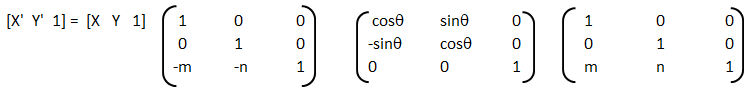

Homogeneous coordinates: in the transformation matrices described in the table above, the origin of the coordinate system is invariant with respect to all of the transformation operations. The concept of homogenous coordinate system is used to obtain transformations about an arbitrary point. The homogenous coordinates of a non-homogeneous position vector {X Y} are {X' Y' h} where X = X'/h and Y = Y'/h and h is any real number. Usually, h = 1 is used for convenience though there is no unique representation of a homogenous coordinate system. Thus, point (3, 5) can be represented as {6 10 2} or {9 15 3} or {30 50 10}. Thus, a general transformation matrix looks like shown below. Note that every point in a two-dimensional plane including origin can be transformation (rotated, reflected, scaled...).

[T] = [T'] [R] [R'] [R]-1 [T']-1

Thus, the steps are:- Translate the line and the object both so that the line passes through the origin: the matrix [T'] in above concatenated matrix [T]

- Rotate the line and the object about the origin so that the line coincides with one of the coordiante axis: the matrix [R]

- Reflect the object about the line - the coordinate axis it coincides with: the matrix [R']

- Apply the inverse rotation above the origin using: the matrix [R]-1

- Apply the inverse translation to the original location: the matrix [T]-1

3D Transformations

The three-dimensional representation and display of an object is necessary for the understanding of the shape of the object. Additionally, the ability to translate (pan), rotate (dolly), scale (zoom), reflect and project help understand the shape of the object. Analogous to homogeneous coordinate system in 2D, a point in 3D space {X Y Z} can be represented by a 4D position vector {X' Y' Z' h} = {X Y Z 1} [T] where [T] is the transformation matrix similar to the one used in 2D case. A generalized 4 × 4 transformation matrix for 3D homogeneous coordinates is:

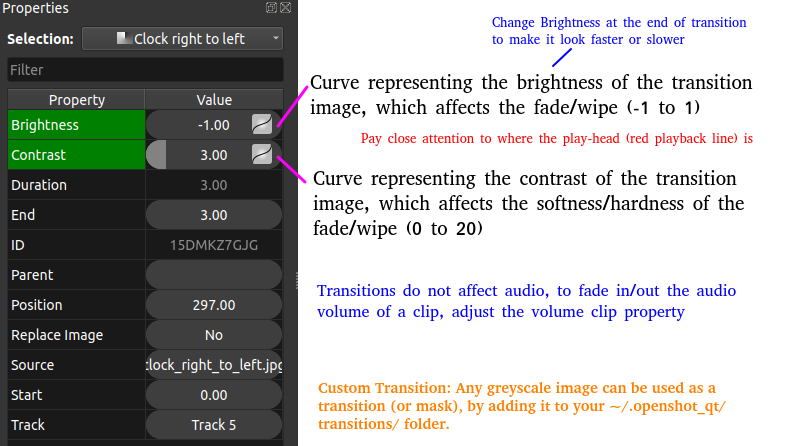

- Alpha Mask / Wipe Transition: Uses a grayscale mask image to gradually wipe / transition between 2 images. Alpha = Transparency

- Bars: Add colored bars around your video

- Blur/Sharpen: Adjust the blur/sharpness of the frame’s image - ffmpeg -i in.mp4 -vf "unsharp= luma_msize_x=7: luma_msize_y=7: luma_amount=2.5: enable= 'between(t, 2, 3)'" -y out.mp4

- Adjust the brightness and contrast of the frame’s image: A value of -1 for brightness makes the image black and value of +1 makes it white. Intermediate values can be used to adjust brightness. The value of contrast varies between -128 to +128 in OpenShot and controls sharpness or intensity or depth of the colour.

- Caption: Add text captions on top of your video - background alpha, padding, corner radius, font alpha, font size, text location... are some of the options available in OpenShot

- Chroma Key (Greenscreen): Replaces the color (or chroma) of the frame with transparency (i.e. keys out the color). This can be used to remove background colours in screen recording with web-cam such as OBS Studio. However, the background colour should be of any single uniform colour and not the green colour only.

- Color Saturation: Adjust the color saturation, 0 means black-and-white image and higher value increase the intensity of the colours

- Color Shift: Shift the colors of an image up, down, left, and right (with infinite wrapping)

- Crop: Crop out any part of your video

- Deinterlace: Remove interlacing from a video (i.e. even or odd horizontal lines)

- Hue: Adjust the hue / color of the frame’s image - this is used to create Colour Change Effect

- Negative: Negates the colors, producing a negative of the image - OpenShot does not provide any control on the attributes of the negative colors of the image

- Object Detector: Detect objects through the video

- Pixelate: Pixelate (increase or decrease) the size of visible pixels that is the effect reduces PPI of the image and hence it blurs the image

- Shift the image up, down, left, and right (with infinite wrapping) - this option separates and shows the 3 colour channels by horizontal and/or vertical shift specified - also known as Glitch Effect sometimes

- Stabilizer: Stabilize video clip to remove undesired shaking and jitter

- Wave: Distort the frame’s image into a wave pattern

Similarly, transition as the name suggests, indicates the method or style to switch between one clip (or frame) to another, such as to gradually fade (or wipe) between two clips. Thus, the most common location of a 'transition' is either at the start or end of the clip or image or keyframe. Direction of transitions adjust the alpha or transparency of the clip below it, and can either fade from opaque (dark) to transparent (default), or transparent to opaque. One interesting animation shown below is 'Cube' transition in PowerPoint and LibreOffice Impress which ensures continuity of information.

Following Python code generate frames to create Cube transition.

def frames_cube_transition(img1, img2, img_w, img_h, steps=50):

transition_frames = []

for i in range(steps):

alpha = i / steps

angle = alpha * np.pi/2

m1 = np.float32([[1, 0, -img_w* np.sin(angle)], [0, 1, 0]])

m2 = np.float32([[1, 0, img_w*(1-np.sin(angle))], [0, 1, 0]])

frame1 = cv2.warpAffine(img1, m1, (img_w, img_h))

frame2 = cv2.warpAffine(img2, m2, (img_w, img_h))

combined_frame = np.maximum(frame1, frame2)

transition_frames.append(combined_frame)

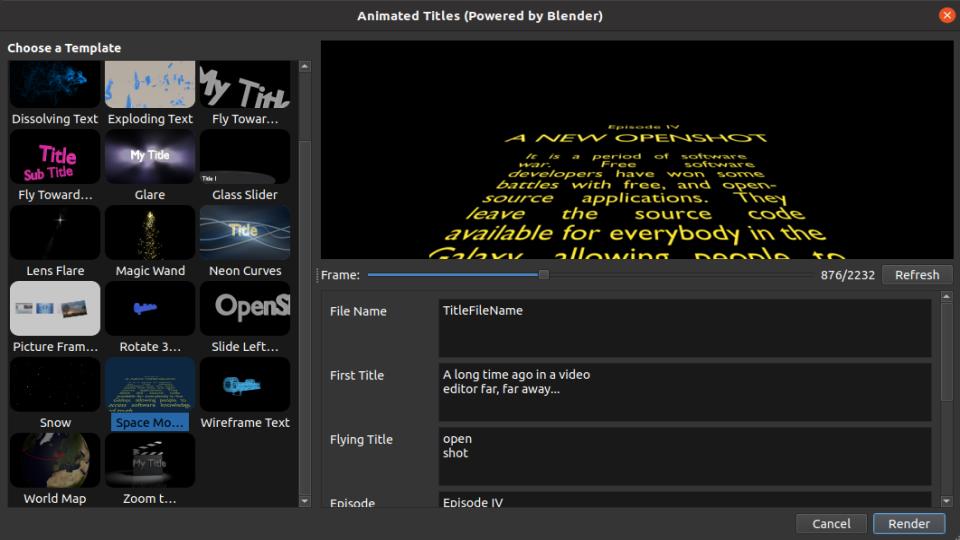

return transition_framesMost of the open source program are better at dealing with images. However, the flexibility to deal with text are less and sometimes limited options are there. For example, to add scrolling credits a long text object is moved vertically to make it appear to be scrolling: appearing from the bottom and disappearing through the top. Blender version 3.6.1 does not have this as standard feature. An indirect way to do this is to create the image of the text and animate it bottom to top.

Animation: the visual appearance of a video and animations are same and hence a video is an animation and an animation is a video - to human eyes. Thus, the option to animate in a Video Editing program may be confusing initially. The feature 'animation' refers to ability to change few keyframes in the clip or the video such as zoom, pan or slide.

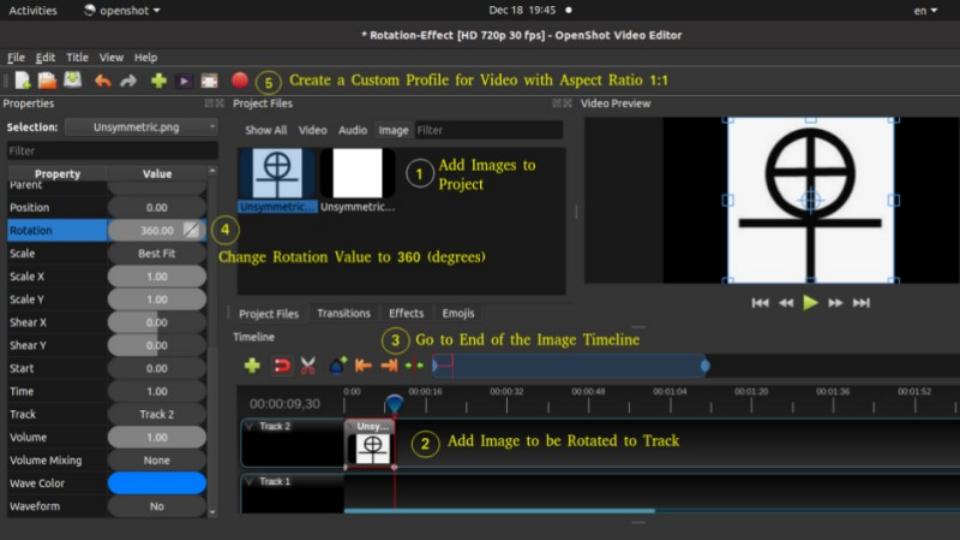

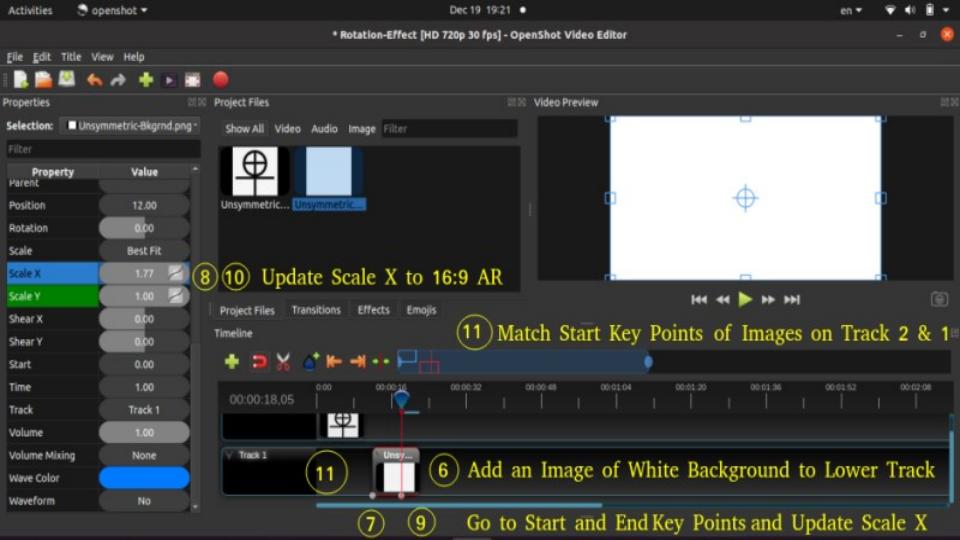

Example demonstration: Create a Zoom and Pan animation in OpenShot

- Step-1: Move the timeline where you want to begin to zoom

- Step-2: Right click on your clip, and choose Transform: this will display some interactive handles over the video preview

- Step-3: Drag the corner (while holding CTRL) to scale the image larger or smaller

- Step-4: Alternatively, use the Clip's properties to change the scale_x and scale_y, and location_x, and location_y properties

- Step-5: Drag the center circle handle to move the image

- Step-6: Move to the next position in the video, and repeat these steps.

Note that most of the 'rotation' codes are meant to rotate an images about z-axis which the axis perpendicular to the plane of computer screen. Rotation about x- and y-axis which are coplanar to computer screens requires homographic transformation which is a projective transformation between two planes. One example is available at answers.opencv.org/ ... /direct-formula-for-3d-rotation-done-by-warpperspective-or-remap. Note that this code is written in old opencv format and not compatible with latest cv2 and Python.

Audio and Video Codecs

The audio data is stored as matrix with rows corresponding to audio frames and columns corresponding to channels. There are other utilities in OCTAVE such as create and use audioplayer objects, play an audio, write audio data from the matrix y to filename at sampling rate fs, create and use audiorecorder objects, scale the audio data and play it at specified sample rate to the default audio device (imagesc vs. audiosc)....

Audio Codec: The processing of audio data to encode and decode it is handled by an audio codec . Bit rate - The higher the bit rate, the higher the quality can be. Some audio codecs are: Advanced Audio Coding (AMC), MP3, Pulse Code Modulation (PCM) of Voice Frequencies (G.711)... Some terms associated with Audio data format and structure are: Sample Size, Channel, Channel Count, Audio Forms, Waveforms, Stereo (2 audio channels)

Video Encoding: In early days of digital video, video files were a collection of still photos. For a video recorded at 30 frames per second, 30 photos per second of footage has to be created and stored. Video encoding is the process of converting video files to a digital files so that they are not saved as collection of individual images but as fluid images. Some of the most popular encoding formats include: MP4, MOV, AVI, QuickTime. Standard definition (SD video) - any recording or video below 720p is considered standard definition. For common resolutions of 720 and 1080, the naming convention is based on the total number of pixels running in a vertical line down the display area. For 2K, 4K or 8K video, the resolution is named for the number of pixels running in a horizontal line across the frame. FHD = 1080P (Full High Definition where 'P' stands for progressive scan and not for Pixels). QHD (Quad High Definition) is 2560x1440 pixels and 2K resolution is 2048 x 1080 pixels. UHD or 4K - Ultra High Definition resolution is technically 3840x2160 pixels.

Remuxing and Transcoding: Remuxing is process of changing the video container only, a lossless process where original audio and video data is kept unaltered. The opposite to remuxing is transcoding, which is about conversion of one encoding method to another. Transcoding changes the source data and hence can be a lossy process.

Frame rate (frames per second or fps - note that the term 'rate' refers to per unit time in most of the cases) is rate at which images are updated on the screen. For videos, sample rate is number of images per second and for audios, sample rate is number of audio waves per second. Number of frames in a video = fps × duration of the video. Note that some video formats do not store the frames as one frame after other and instead use delta frames where only the changes from one frame to next are stored. In these cases, Number of frames in a video = fps × duration of the video shall not hold true. The programs that are used for video file compression and playback are called codecs. Codec stands for coder and decoder. As in 2022, the best video codec is H.264. Other codecs available are MPEG-2, HEVC, VP9, Quicktime, and WMV.

| Video Format | Creator / Owner | Usage Recommendations |

| AVI | Microsoft | For playing video on Windows devices, large file size |

| AVCHD | --- | High quality but requires good hardware for playback |

| FLV & F4V | Adobe | Flash video formats, once popular now obsolete |

| MP4 (H.264) | Open-source | Wide compatibility and balance of file size and quality |

| MKV | Open-source | Stores wide range of video, audio, and subtitle tracks in one file |

| M4V | Apple | Similar to MP4 but protected by Apple’s FairPlay DRM copyright |

| MOV | Apple | Storing high-quality video files, and professional video editing |

| MPEG-2 | Open-source | Used for DVDs and sometimes for TV broadcasts |

| ProRes | Apple | High-definition video editing in Final Cut Pro |

| DNxHR, DNxHD | Avid Technology | high-quality video editing in Avid Media Composer |

| 3GP, 3G2 | Open-source | Offers small file sizes suitable for mobile viewing |

| OGV | Open-source | Ogg Video: used for web applications supported by HTML5 |

| WebM | Open-source | Designed for the web, good compression and video quality supported by HTML5 |

| WMV | Microsoft | Windows Media Video, optimized for Windows Media Player |

Image Editing using ImageMagick

ImageMagick is nearly an universal tool to open any format of image and convert into another format. In MS Windows, once you have added ImageMagick installation folder location into 'Path' variable, use magick.exe mogrify -format jpg *.heic to convert all images in HEIC format to JPG format. -quality 75 can be added to specify the quality level of output image. The value 75 specified here can be anywhere between 1 to 100 where 1 refers to the most compression and worst quality. To scale all PNG images in current folder: magick.exe mogrify -resize 540x360 *.png. The option -resize 540x keeps the height in proportion to original image and -resize x360 keeps the width in proportion to original image. Option -resize 540x360 is equivalent to min(540x,x360). To resize all images in current directory to 480 width (and height reduced proportionally): mogrify -resize 480x *.jpg and to resize to a height of 270px: convert input.jpg -geometry x270 output.jpg and to scale down images to 360 pixels: mogrify *.jpg -resize 360x360^ImageMagick provides two similar tools for editing and enhancing images: convert - basic image editor which works on one image at a time and mogrify - mostly used for batch image manipulation which by default overwrite the input images. Note that the output of both these two tools are not always the same. If you are using Windows OS, there is in-built 'convert' function. Hence, to use 'convert' utility, one need to use the command "C:\Program Files\ImageMagick-7.0.10-Q8\magick" convert input.png -crop 100x100 + 800+450 cropped.png. To crop from all sides, use -crop option twice with first pair denoting left-top values and the second pair specifies right-bottom crop magnitudes: "mogrify -crop +100+50 -crop -75-25 ./img_folder/*.png" where crop values are 100 from left, 50 from top, 75 from right and 25 from bottom. For single image: convert input.png -crop +100+50 -crop -75-25 cropped.png. To change format of images in a folder: convert *.TIF -set filename: "%t" %[filename:].jpg. To scale all image and store scaled images in a folder (no overwriting of the existing files): mogrify -resize 600x -path ./Scaled *.jpg - note that the specified folder (./Scaled here) must exist before running this statement.

Scale all images in a folder to specified dimension maintaining aspect ratio of original images

def resizePadImages(image, target_w, target_h, pad_color=(255, 255, 255)):

'''

Scale the image while maintaining the aspect ratio - fill extra space

in width and/or height direction with specified pad_color which has

default value of white that is RGB = (255, 255, 255).

'''

ht, wd = image.shape[:2]

size = (target_h, target_w)

scale_factor = min(target_w / wd, target_h / ht)

new_w, new_h = int(wd * scale_factor), int(ht * scale_factor)

resized_img = cv2.resize(image, (new_w, new_h), interpolation=cv2.INTER_AREA)

# Create a new image with the target size and fill it with the pad color

scaled_image = np.full((target_h, target_w, 3), pad_color, dtype=np.uint8)

# Center the resized image on the padded image

dx = (target_w - new_w) // 2

dy = (target_h - new_h) // 2

scaled_image[dy:dy+new_h, dx:dx+new_w] = resized_img

return scaled_imagedef scaleImagesFolder(input_folder, target_w, target_h, colr):

output_folder = os.path.join(input_folder, "scaled_images")

if not os.path.exists(output_folder):

os.makedirs(output_folder)

for file_name in os.listdir(input_folder):

if file_name.lower().endswith(('.png', '.jpg', '.jpeg')):

image_path = os.path.join(input_folder, file_name)

image = cv2.imread(image_path)

if image is not None:

scaled_padded_image = resizePadImages(image, target_w, target_h, colr)

output_path = os.path.join(output_folder, file_name)

cv2.imwrite(output_path, scaled_padded_image)

scaleImagesFolder('Img', 640, 360, (255, 255, 255))

Image and Video Editing using OpenCV

Convert images (PNG, JPG) to video (mp4, avi) - click on the link for Python script. Many a time the image files are named as img-1.png, img-2.png...img-9.png, img-10.png, img-11.png...img-20.png, img-21.png... Sorting these files may not results in desired order as img-10.png shall be next in alphabetical order after img-1.png. Following Python code can be used to rename these files by padding zeros.

def renamePaddingZeros(folderName, file_extn, n_zero, sep_char):

'''

Rename all files in specified folder with adding leading zero specified

by variable n_zero. It can handle any extension type so long there is

only 1 dot in the file name. It can also handle multiple occurrences

of separator in the file names. The code exits with user message if no

suitable file found.

file_extn: extension of file type such as JPEG, PNG (case sensitive)

n_zero: number of zeros to be padded

sep_char: separator character before the image number

'''

file_extn_x = "*." + file_extn

n_char_extn = len(file_extn) + 1

list_files = sorted (glob.glob(os.path.join(folderName, file_extn_x)))

if len(list_files) < 2 or n_zero < 2:

print("Invalid parameters or insufficient inputs. Exiting!\n")

sys.exit()

for file_name in list_files:

num = file_name[: -n_char_extn].split(sep_char)[-1]

prefix = file_name.rsplit(sep_char, 1)[0]

num = num.zfill(n_zero)

new_file_name = prefix + sep_char + num + "." + file_extn

os.rename (file_name, os.path.join(folderName, new_file_name))

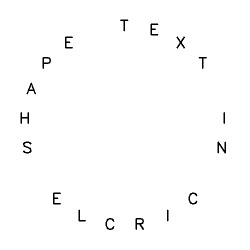

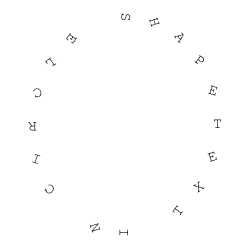

Add text in circular shape similar to 'Circle' text effect of in an Word Art in PowerPoint

def addTextCircularShape(image, text, center, radius, font_scale=1, color=(0, 0, 0), thk=2):

font = cv2.FONT_HERSHEY_SIMPLEX

# Calculate angle increment for even distribution

dq = 360 / len(text)

for i, char in enumerate(text):

# Add 270 to start from the top - 0 is at 3 o'clock position in CW

angle = i * dq + 270

angle_rad = np.radians(angle)

# Calculate coordinates on the circle

x = int(center[0] + radius * np.cos(angle_rad))

y = int(center[1] + radius * np.sin(angle_rad))

# Calculate text size to center properly

text_size, _ = cv2.getTextSize(char, font, font_scale, thk)

# Adjust coordinates to center text on the circle

dx = text_size[0] // 2

dy = text_size[1] // 2

cv2.putText(image, char, (x - dx, y + dy), font, font_scale, color, thk)

return image

To add the timer (time elapsed since video started playing), refer to this Python with OpenCV code. Timer can also be added using FFmpeg, scroll down for command line syntax. To add two videos side by side in width direction, refer to this Python + OpenCV code. Note that no padding (gap) between the two videos are added. To add two videos in vertical (up/down) direction, refer to this code. To add 4 videos in 2x2 box, refer to this Python + OpenCV code.. Compress video: ffmpeg -i in.mp4 -vcodec h264 -acodec mp2 out.mp4 or define an alias in Linux .basrc file: cmpvid() {ffmpeg -i "$1" -vcodec h264 -acodec mp2 output.mp4} where $1 is the name of input video and can be used as cmpvid in.mp4 on terminal.

To add 3 videos in a 2x2 row with fourth video (bottom-right) as blank video (video with white background), refer this Python + OpenCV + numPy code. In case the location of fourth video needs to be replaced with an image, refer this Python + OpenCV. Note none of these codes check existence of input specified in the code. These codes can be improvised by adding checks for missing input and option to provide inputs from command line. In case you want to add partition line(s), you may use this code.In case you are not able to play the video created after combining the 3 or 4 videos, try to scale down the input videos. The resultant height and width (twice the size of input videos) may not be displayed on the (laptop or computer) screen you are using.

Sometimes the frame rate per second (FPS) of the input videos needs to be adjusted to a common value. Use this Python+OpenCV code to change the FPS of a video.

Create Video by Rotating an Image: refer to this code.

Programs to edit videos: FFmpeg (written in C), OpenShot and its similar looking cousin program ShotCut, Blender [itself written in C/C++], Windows Video Editor, Movie Maker (not supported beyond Windows-10). FFmpeg is a command-line tool (though few GUI do exist). As per the website ffmpeg.org: "A complete, cross-platform solution to record, convert and stream audio and video." avconv - audio video converter, SimpleCV (a program similar to OpenCV and does not look to be maintained), imageio, MoviePy (uses FFmpeg, imageio, PIL, Matplotlib, scikit-image...), Vapory (library to render 3D scenes using the free ray-tracer POV-Ray), Mayavi, Vispy... HandBrake is a tool for converting video from nearly any format to a selection of modern, widely supported codecs.Excerpts from avconv manual page: avconv is a very fast video and audio converter that can also grab from a live audio/video source. It can also convert between arbitrary sample rates and resize video on the fly with a high quality polyphase filter."

Excerpts from MoviePy documentation: "MoviePy uses the software FFmpeg to read and to export video and audio files. It also (optionally) uses ImageMagick to generate texts and write GIF files. The processing of the different media is ensured by Python’s fast numerical library Numpy. Advanced effects and enhancements use some of Python’s numerous image processing libraries (PIL, Scikit-image, scipy, etc.)". Requires scikit-image for vfx.painting.

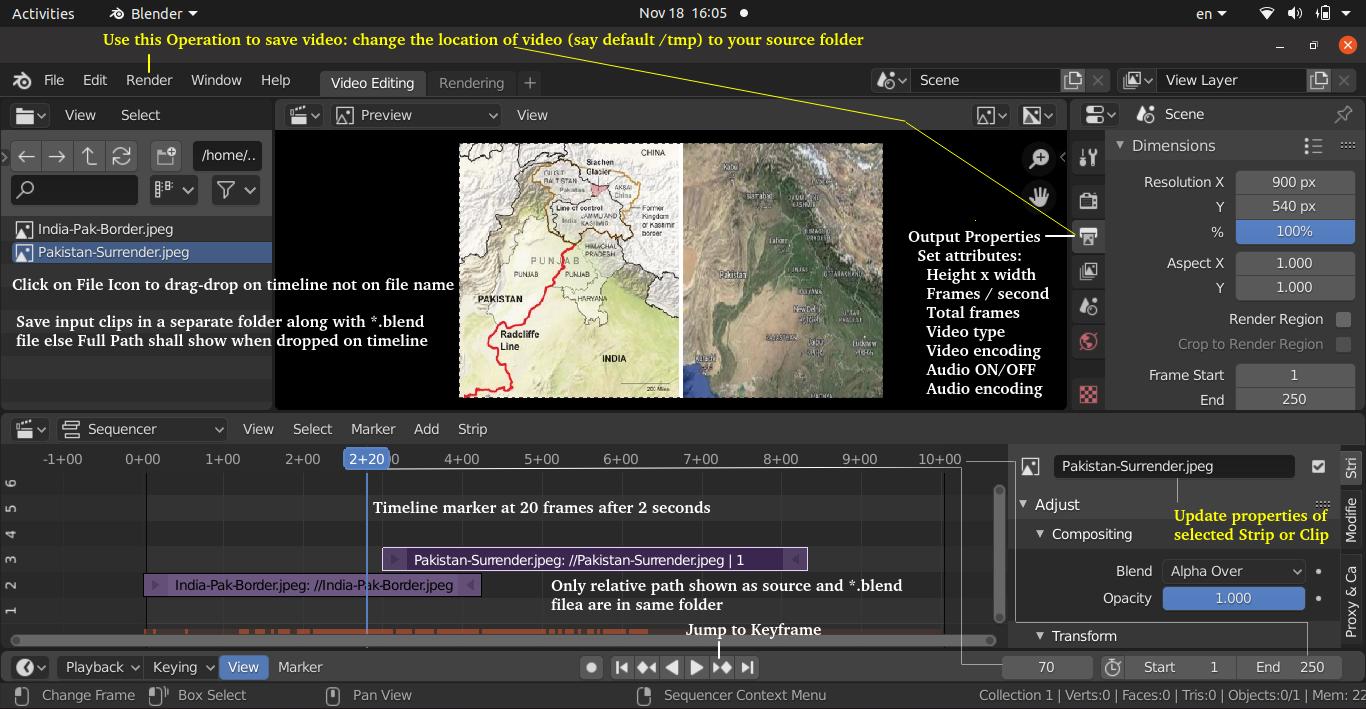

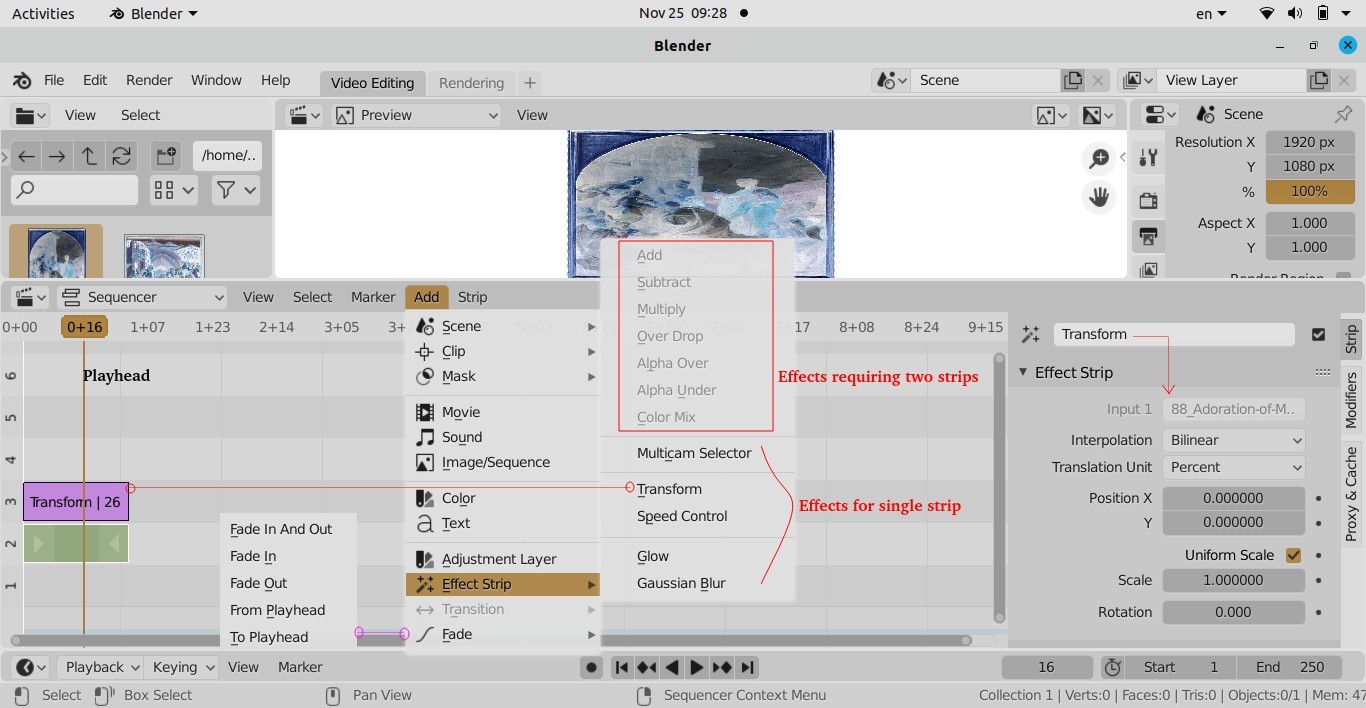

Few Tips for Video Editing:

- Review all your footages, images and clips (known as strips in Blender)

- Assemble (i.e. add footages, images, clips... on the timeline inside video editor) and create a rough cut

- Keep the clips long enough (in terms of time duration) to enable addition of various effects and transitions, the duration can be adjusted during finishing operations

- Add transition effects as last operations of the editing process

- Turn snapping of clips ON and OFF as needed

- If you are adding narration of an existing video, add a long transition where topics end - this will give you time to wrap up one topic and move to another

- Add few sections with text and background music - no voice content

- Use keyboard short cuts where a better control with mouse pointer is difficult

- To make end look exactly like start of a clip (also known as Time Symmetrization), make the clip play once forward and once backward.

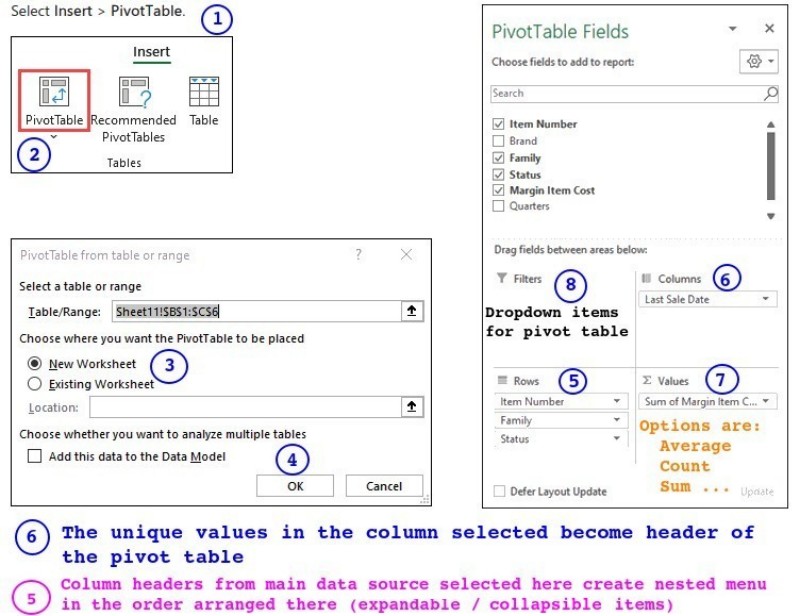

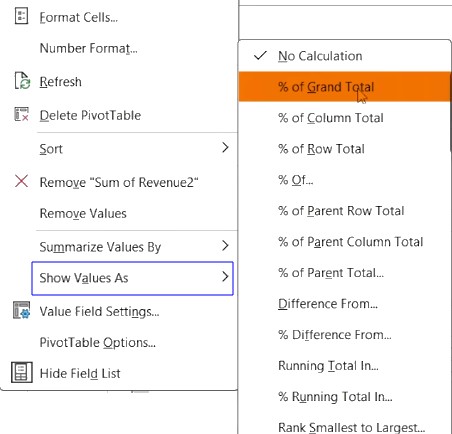

FFmpeg